Defined problem framing and interaction architecture, built scenario development, drove hardware UX strategy, and led the Disclosure of Invention (DOI) submission end-to-end.

Context-Aware Gesture System for Smart Glasses

A gesture is a signal the system interprets through environment, user state, and learned behavior — before any action is taken.

Gestures in XR are treated as commands.

This breaks in real life — where context, environment, and user state constantly change.

This project reframes gestures as signals, interpreted by the system rather than mapped to fixed actions.

Current XR systems map a small set of gestures to fixed commands. This works in controlled demos, but fails in real-world use — where intent, environment, and social context vary constantly.

This work defines a system that interprets gestures in context — combining movement, environment, and user state to infer intent and generate appropriate responses.

1 co-inventor (PM, 30% contribution)

Smart glasses (audio, display, AR), VR HMD

Patent filed, 2025 · A1 graded

Highest commercial viability rating.

The interaction system for smart glasses must be a continuous inference engine.

Problem

Current XR gesture systems rely on fixed vocabularies: small sets of predefined commands that users must learn and adapt to. This breaks down in real-world use, where context, habit, and environment constantly change. When a gesture doesn’t match the predefined vocabulary, it fails, and the user adapts instead. The core problem is not gesture recognition; it is interpretation.

Approach

Instead of mapping gestures to fixed functions, the system interprets them through a continuous loop: sensing context, inferring intent, acting, and learning over time. Gestures are not inputs to execute — they are signals the system resolves into appropriate responses.

01

Sense

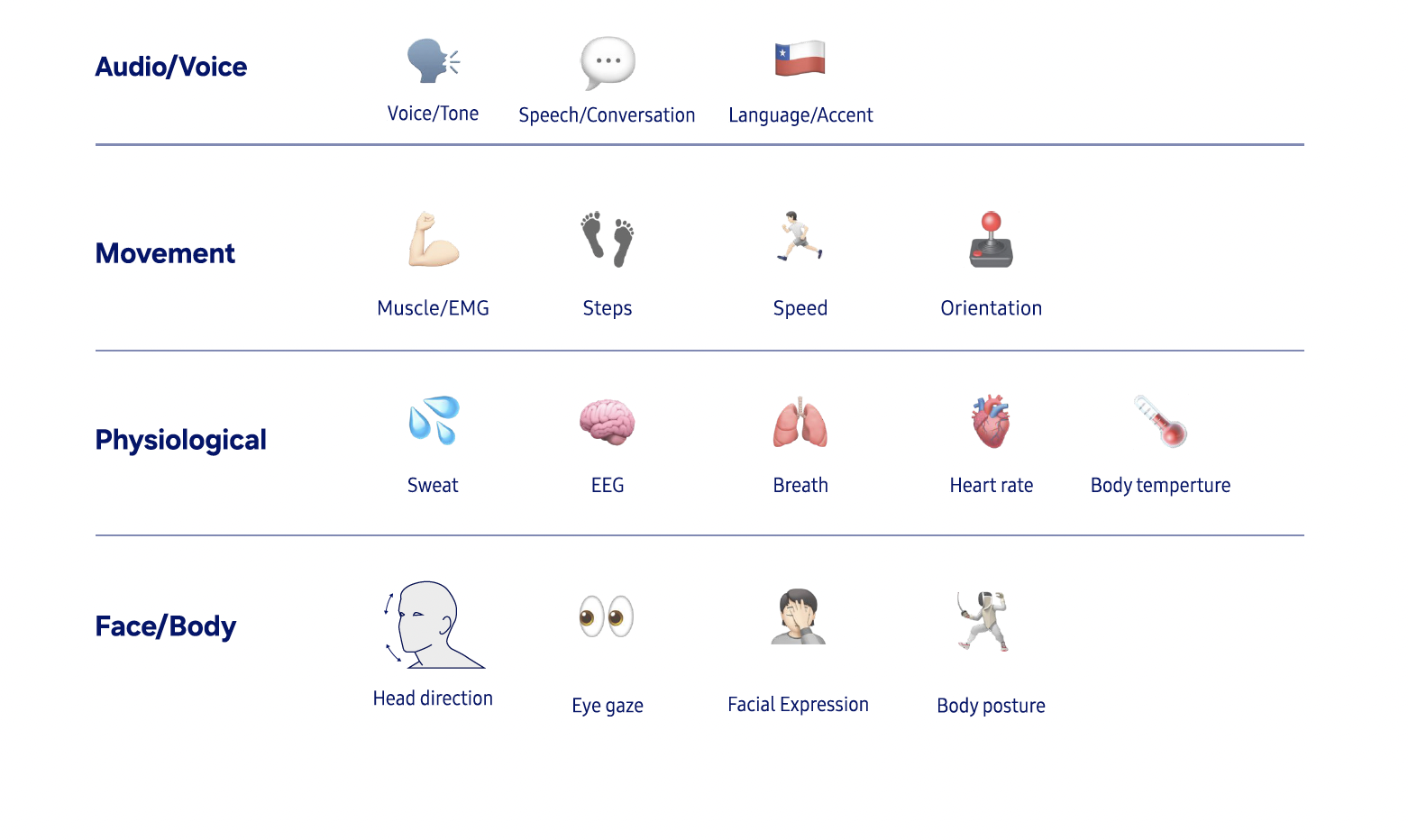

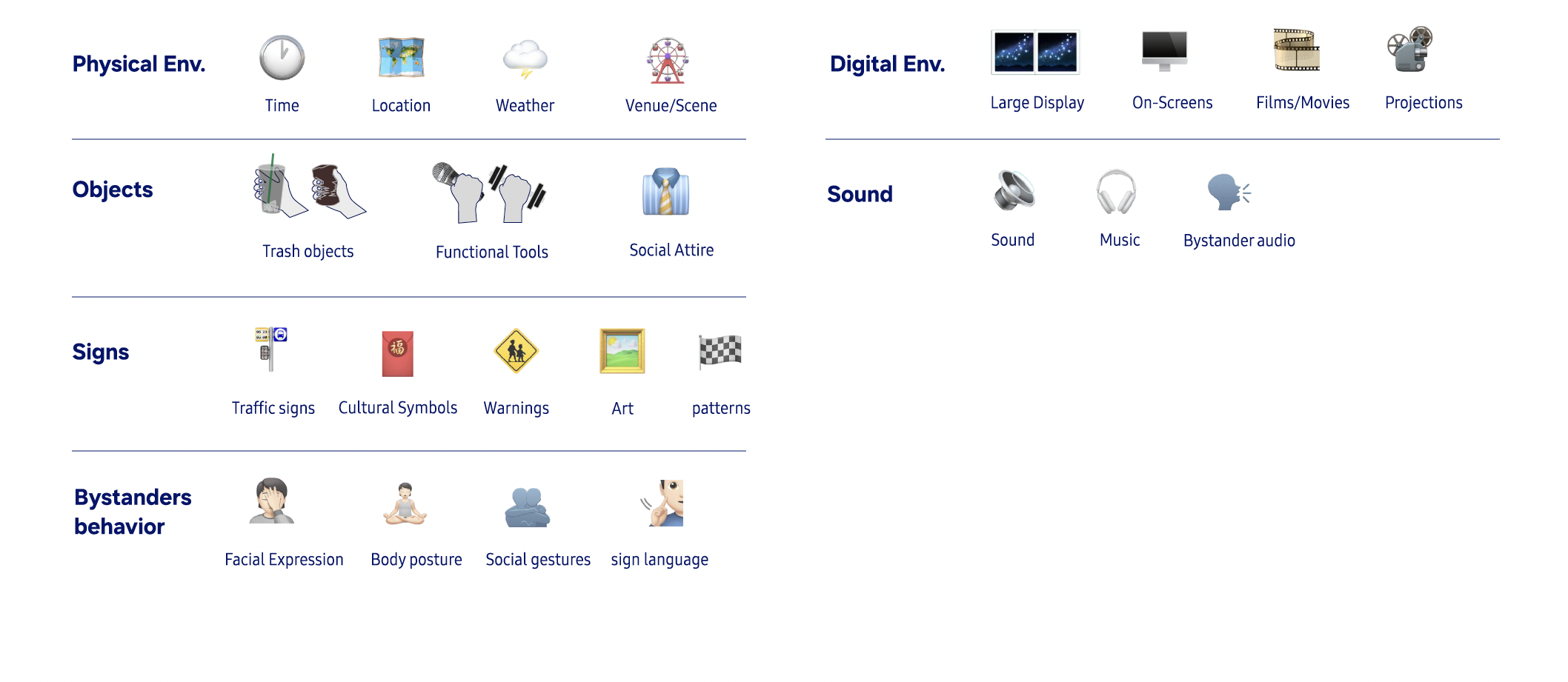

Capture multimodal signals: body movement, environment, and device context

02

Infer

Interpret user intent based on context, history, and situation

03

Act

Generate adaptive responses across the appropriate surface and modality.

04

Learn

Update the system’s model from each interaction.

The system builds understanding progressively — combining gesture, context, and user history into a single decision loop.
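
The four stages above can be sketched as a small loop. This is only an illustration under stated assumptions, not the filed method: the class and field names (`Signals`, `InteractionLoop`), the example gesture, and the count-based inference rule are all hypothetical stand-ins for whatever model the real system uses.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Signals:
    """Output of the Sense stage (field names are illustrative)."""
    gesture: str
    environment: str


@dataclass
class InteractionLoop:
    # Learned history: how often a (gesture, environment, intent) triple was confirmed.
    history: Counter = field(default_factory=Counter)

    def infer(self, signals: Signals, candidates: list[str]) -> str:
        """Infer: pick the candidate intent confirmed most often in this context."""
        return max(
            candidates,
            key=lambda intent: self.history[(signals.gesture, signals.environment, intent)],
        )

    def act(self, intent: str) -> str:
        """Act: a real system would drive a display or speaker here."""
        return f"perform:{intent}"

    def learn(self, signals: Signals, confirmed_intent: str) -> None:
        """Learn: reinforce the intent the user actually wanted."""
        self.history[(signals.gesture, signals.environment, confirmed_intent)] += 1


loop = InteractionLoop()
signals = Signals(gesture="pinch", environment="kitchen")
candidates = ["set_timer", "take_photo"]

first = loop.infer(signals, candidates)   # no history yet: the first candidate wins the tie
loop.learn(signals, "take_photo")         # the user corrects the system
second = loop.infer(signals, candidates)  # history now favors take_photo
print(loop.act(first), loop.act(second))
```

The point of the sketch is that the same sensed signals can resolve to a different intent once the loop has learned from a correction.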

Key Design Decisions

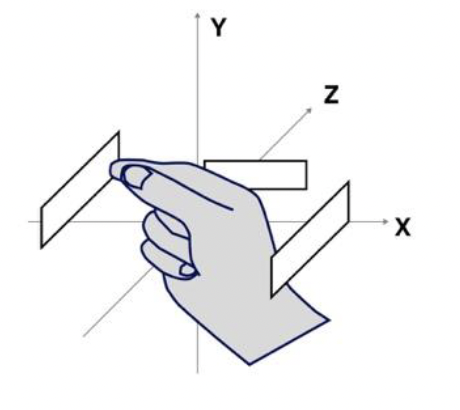

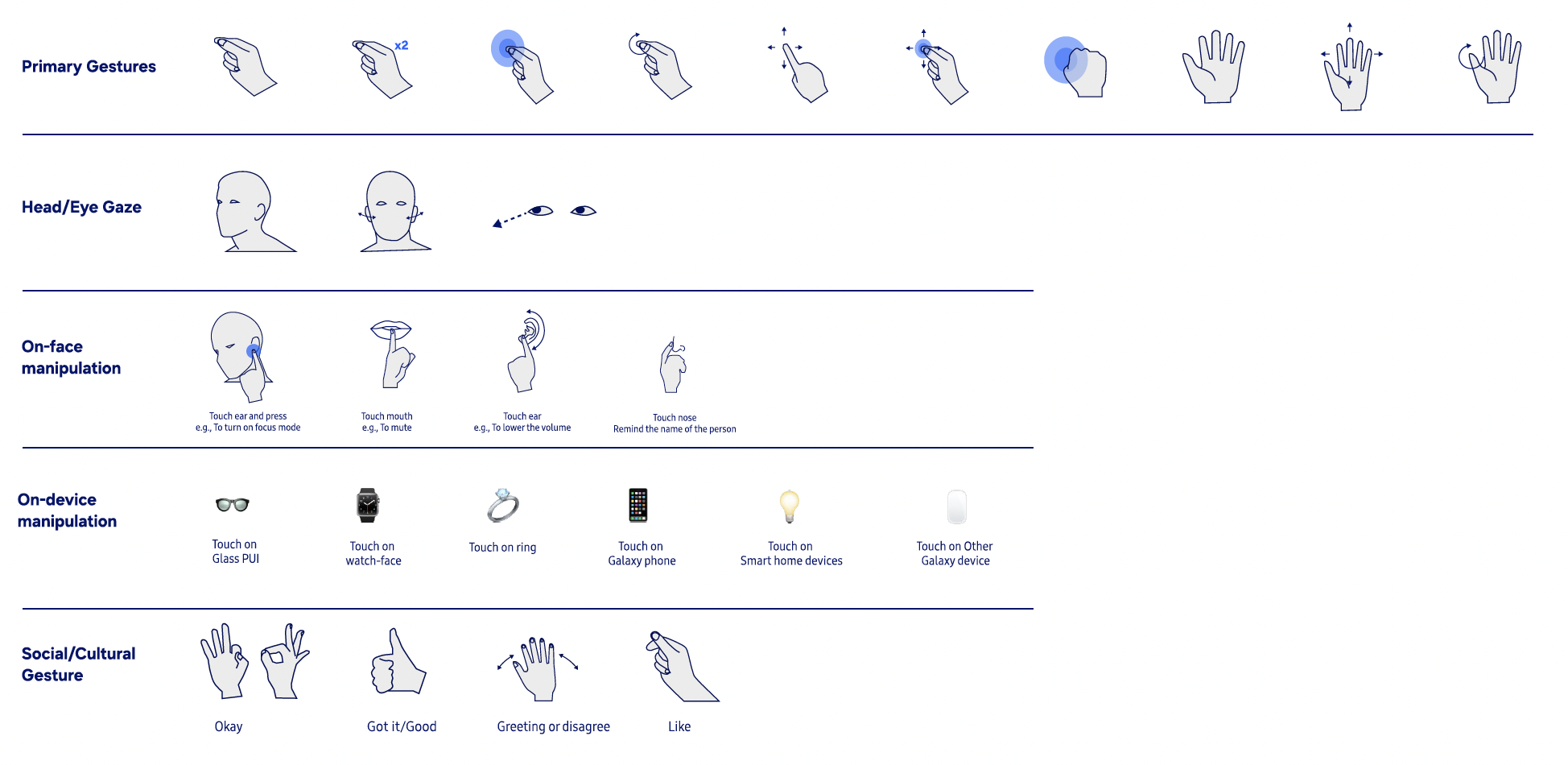

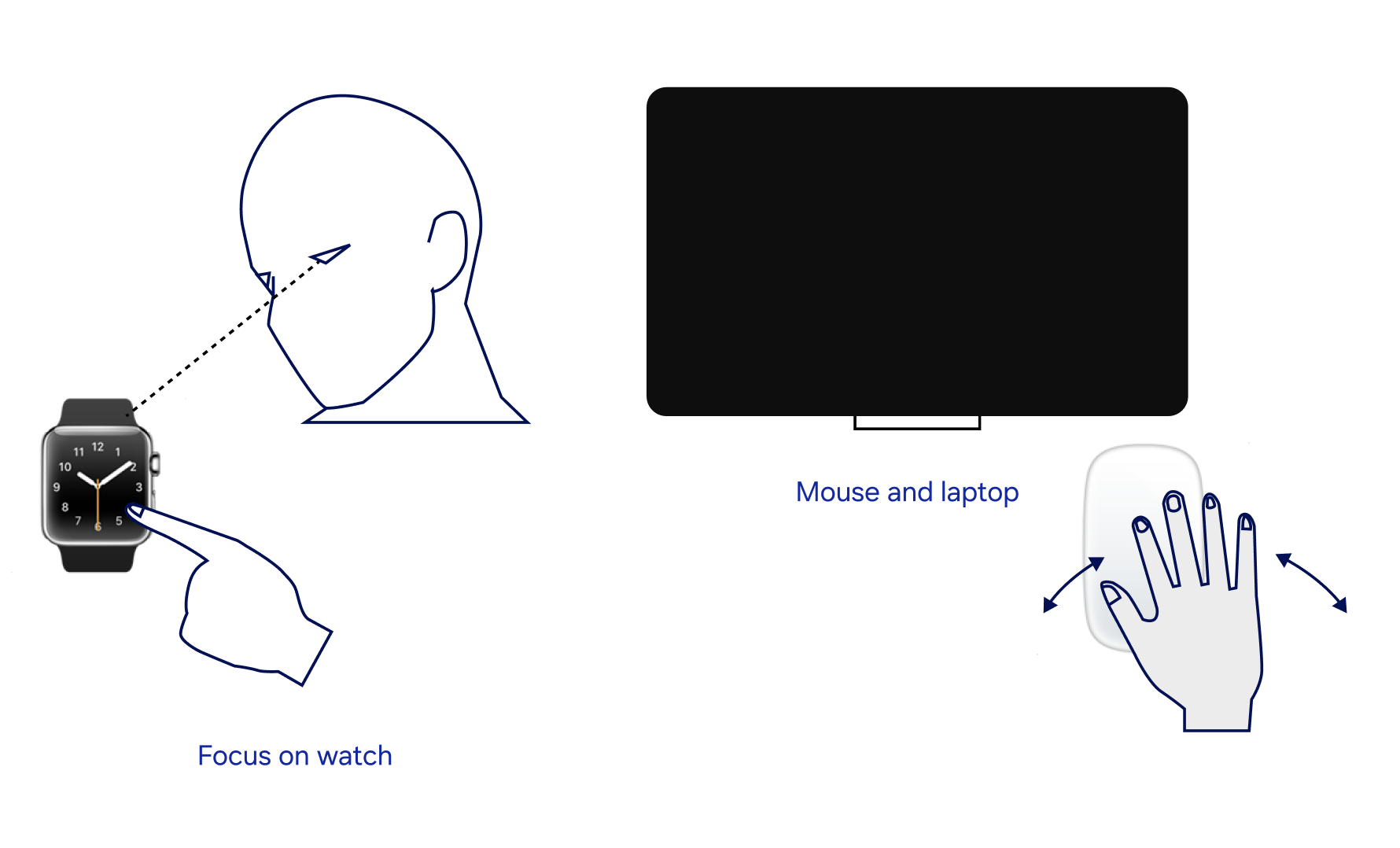

Gesture is interpreted alongside gaze, motion, proximity, and environment.

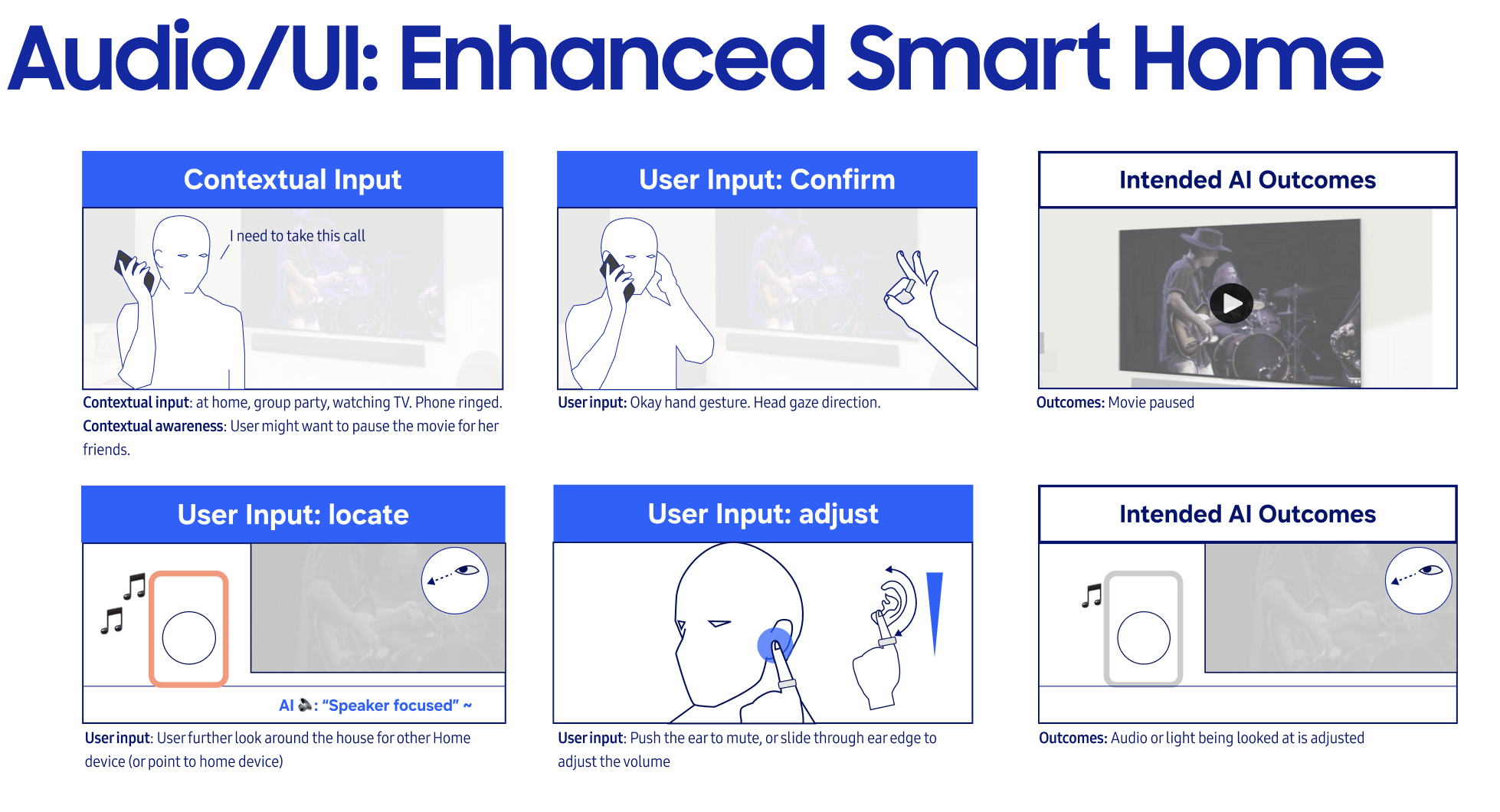

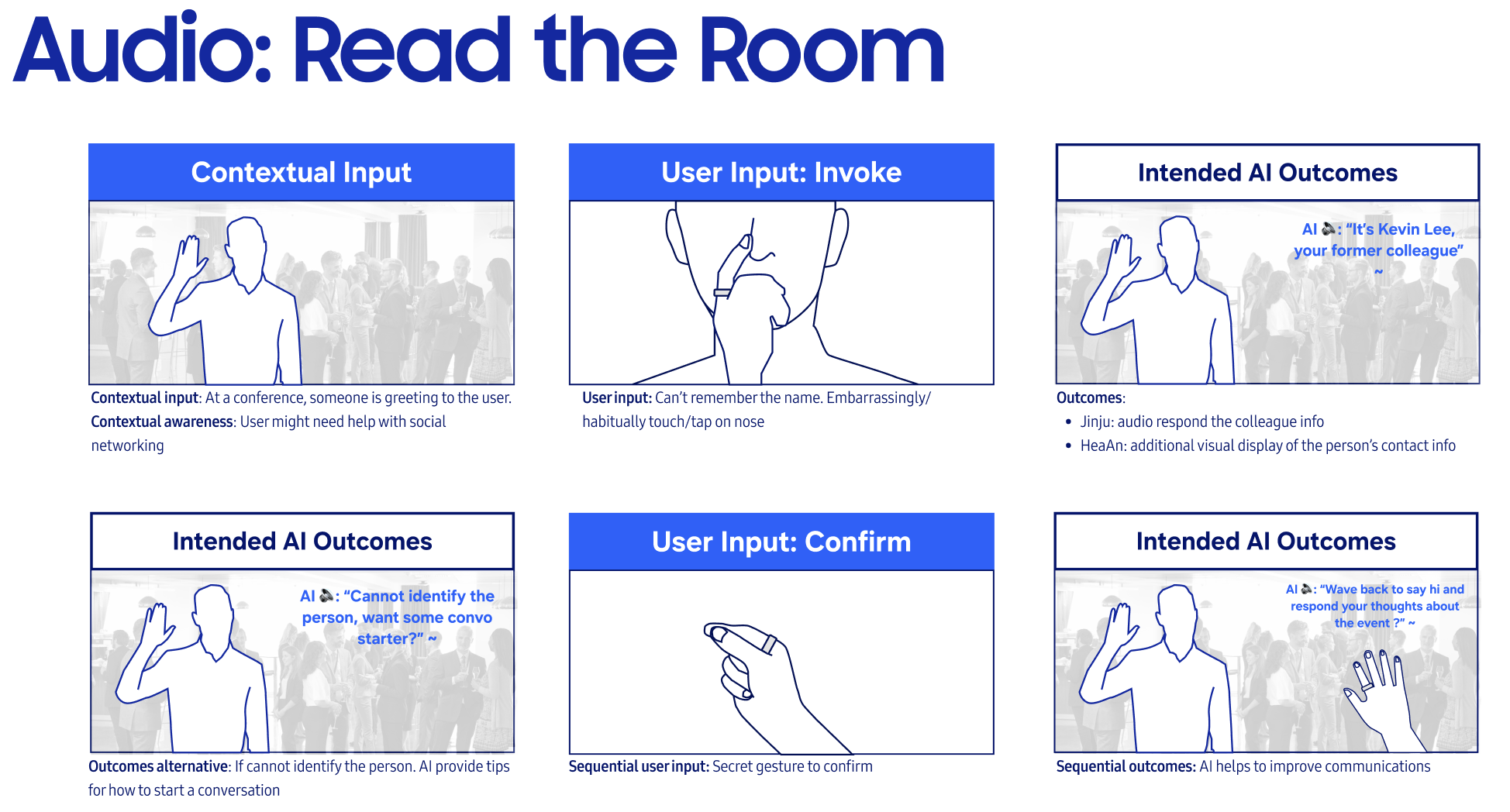

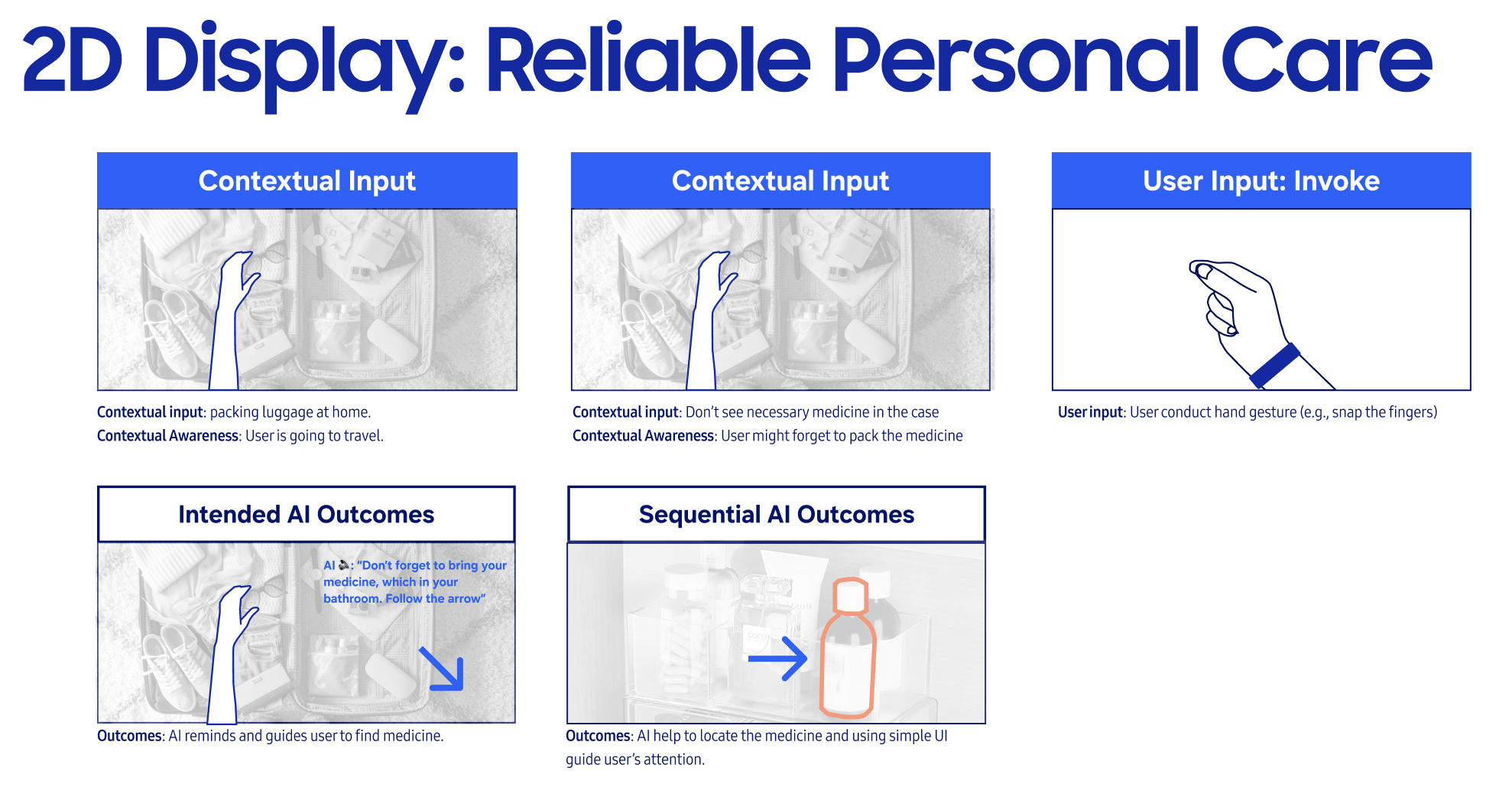

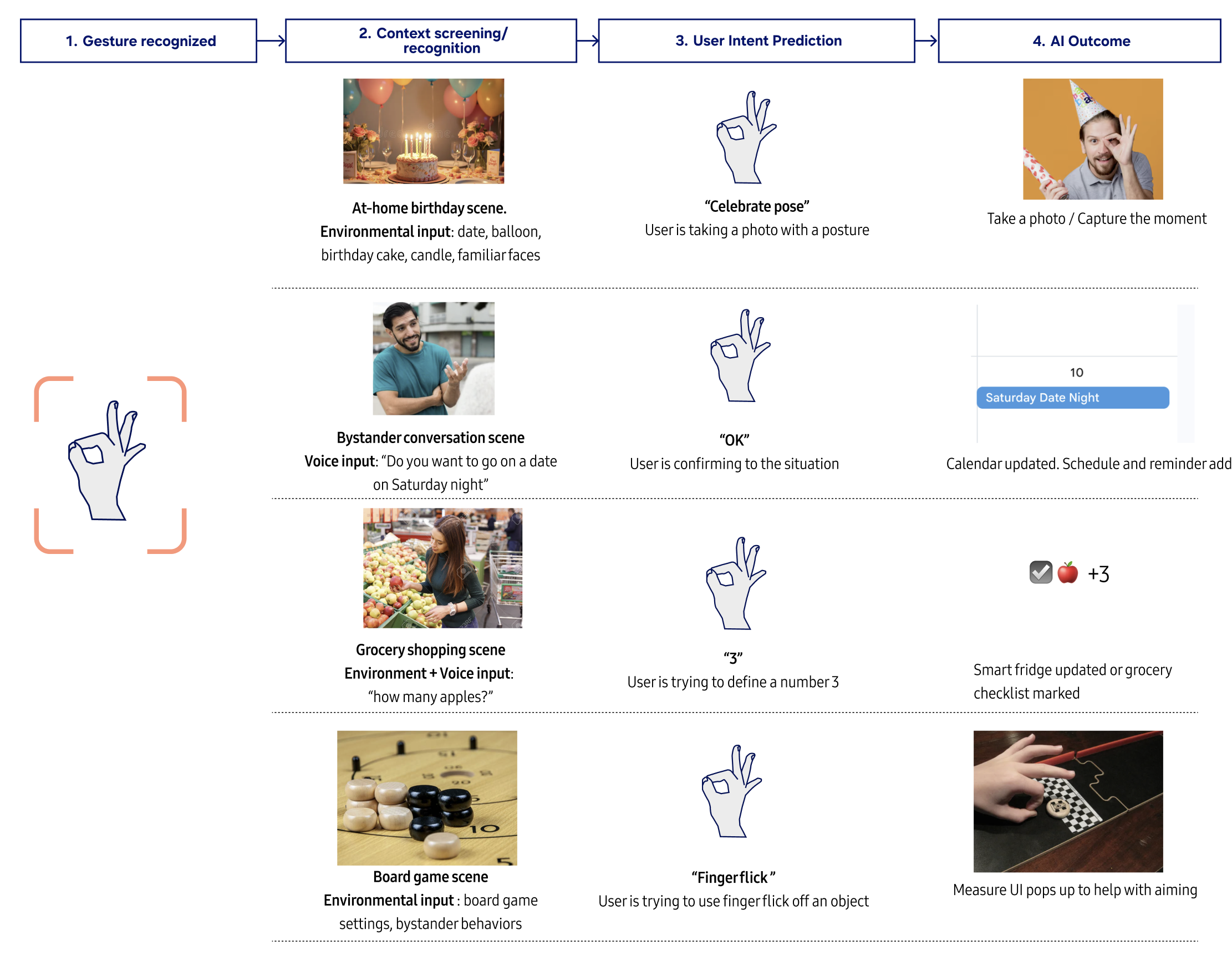

The same gesture produces different outcomes depending on environment, user state, and intent. Take the "OK" hand sign as an example.

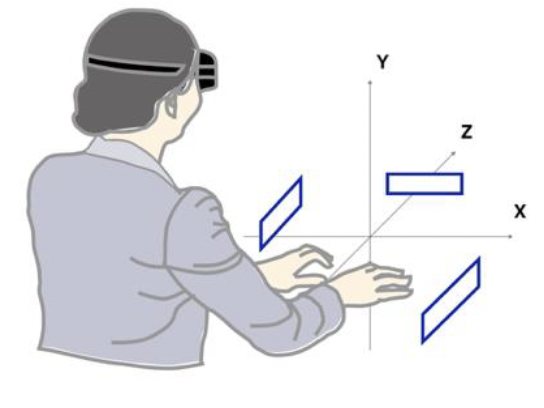

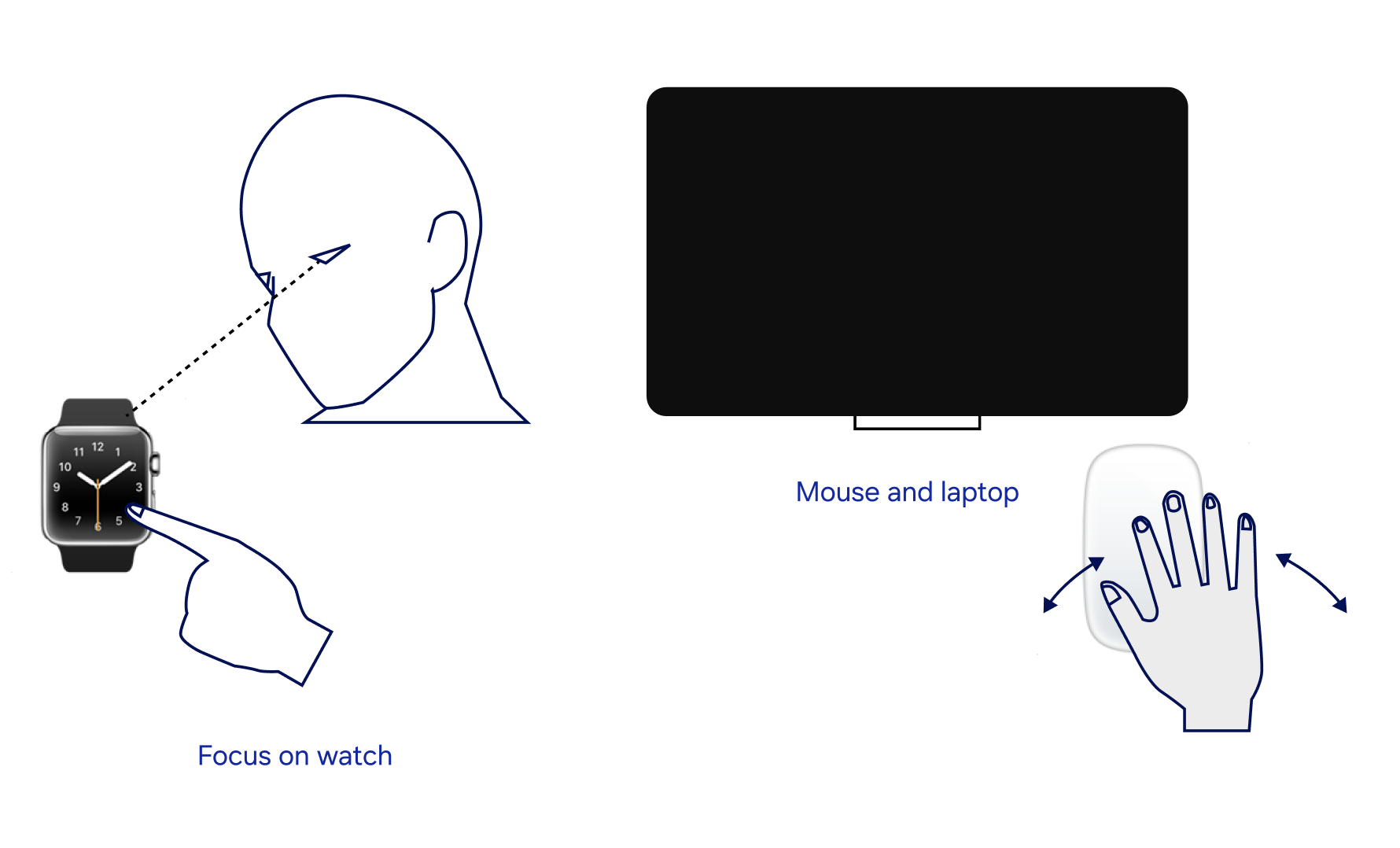

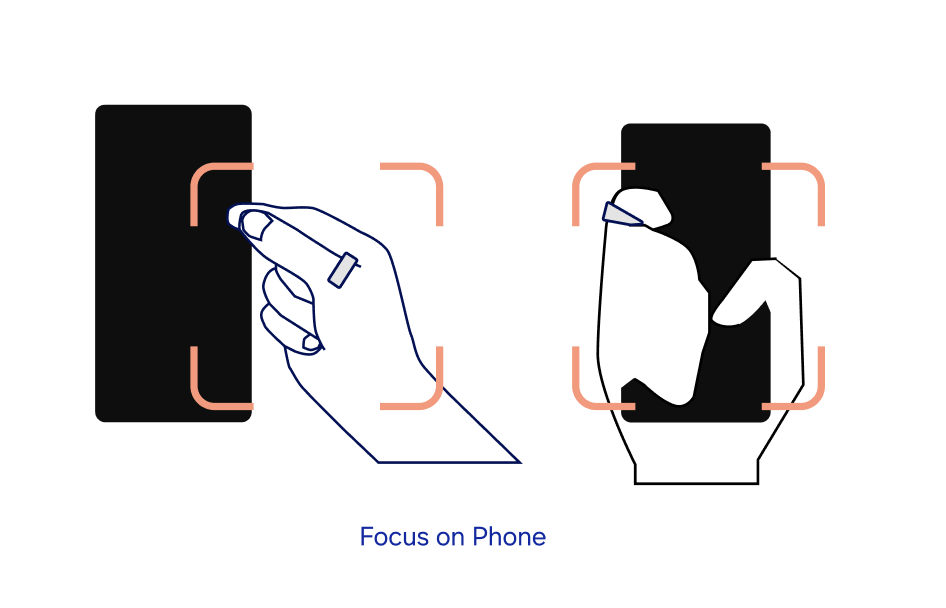
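
One way to picture context-dependent resolution of the "OK" sign is a small rule table matched from most to least specific. The contexts and responses below are invented for illustration and are not taken from the project's scenarios; a production system would presumably use a learned model rather than hand-written rules.

```python
from typing import NamedTuple


class Context(NamedTuple):
    environment: str  # e.g. "meeting", "street" (hypothetical labels)
    user_state: str   # e.g. "incoming_call", "idle" (hypothetical labels)


# Hypothetical rules, most specific first. None acts as a wildcard.
OK_RULES = [
    (("meeting", "incoming_call"), "send_quick_reply"),
    ((None, "incoming_call"), "accept_call"),
    ((None, "viewing_notification"), "dismiss_notification"),
]


def resolve_ok(context: Context) -> str:
    """Return the response for an "OK" sign in the given context."""
    for (environment, user_state), response in OK_RULES:
        env_matches = environment in (None, context.environment)
        state_matches = user_state in (None, context.user_state)
        if env_matches and state_matches:
            return response
    # No rule applies: fall back to asking instead of guessing.
    return "ask_user"


print(resolve_ok(Context("meeting", "incoming_call")))
print(resolve_ok(Context("street", "incoming_call")))
print(resolve_ok(Context("street", "idle")))
```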

Output can also shift across the ecosystem. Each output device can deliver a different part of the response: the system either routes multimodal feedback automatically to the surface that best fits the situation, or lets the user override the target surface through behavior.

01

Context-aware distribution

02

Intention-guided overrides

03

Hybrid
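
The three routing modes listed above can be sketched as a single function. The mode keys, surface names, and context labels are hypothetical, and the sketch simplifies by returning one target surface, whereas the described system can split a response across several devices.

```python
from typing import Optional

# Hypothetical mapping from situation to the best-fitting output surface.
CONTEXT_DEFAULTS = {
    "walking": "glasses_audio",
    "at_desk": "glasses_display",
    "driving": "car_speaker",
}
FALLBACK_SURFACE = "glasses_audio"


def route_output(mode: str, context: str, override: Optional[str] = None) -> str:
    """Pick the output surface for a response under one of three modes."""
    by_context = CONTEXT_DEFAULTS.get(context, FALLBACK_SURFACE)
    if mode == "context_aware":
        # The system decides on its own.
        return by_context
    if mode == "intention_guided":
        # The user's behavior names the target surface explicitly.
        if override is None:
            raise ValueError("intention_guided mode needs an override surface")
        return override
    if mode == "hybrid":
        # The user's choice wins when present; otherwise the system decides.
        return override or by_context
    raise ValueError(f"unknown mode: {mode}")


print(route_output("context_aware", "driving"))
print(route_output("hybrid", "walking", override="phone_screen"))
print(route_output("hybrid", "walking"))
```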

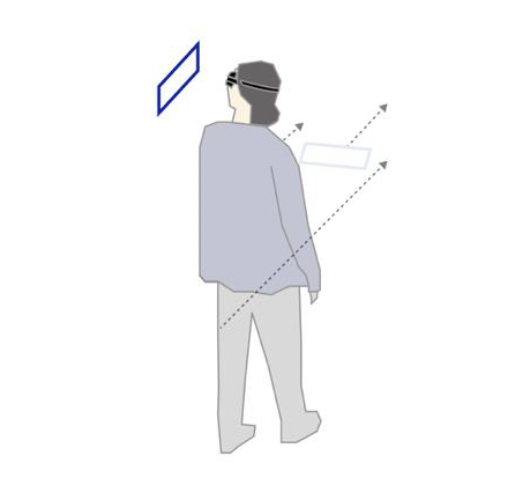

As the system learns, interaction compresses — moving from reactive to anticipatory.

Applied to user scenarios

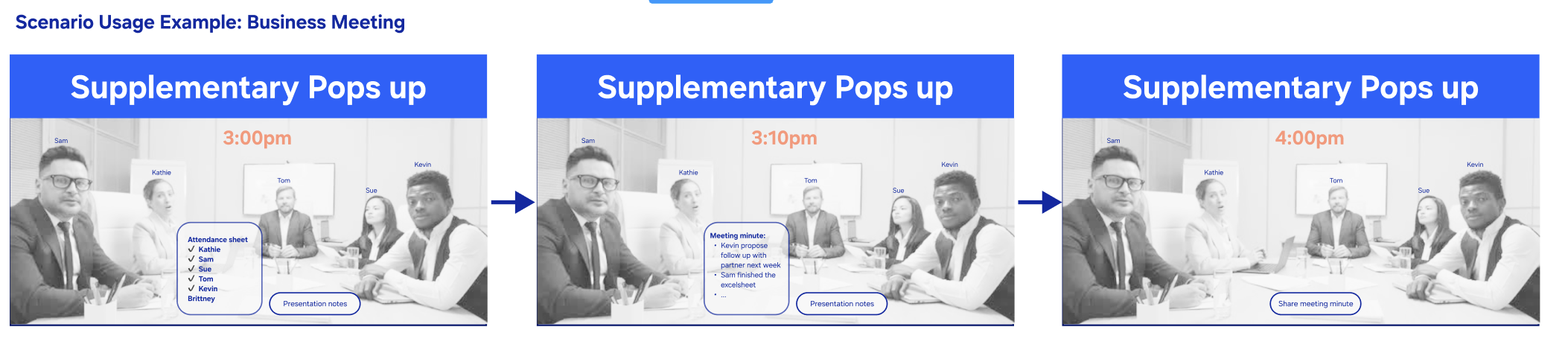

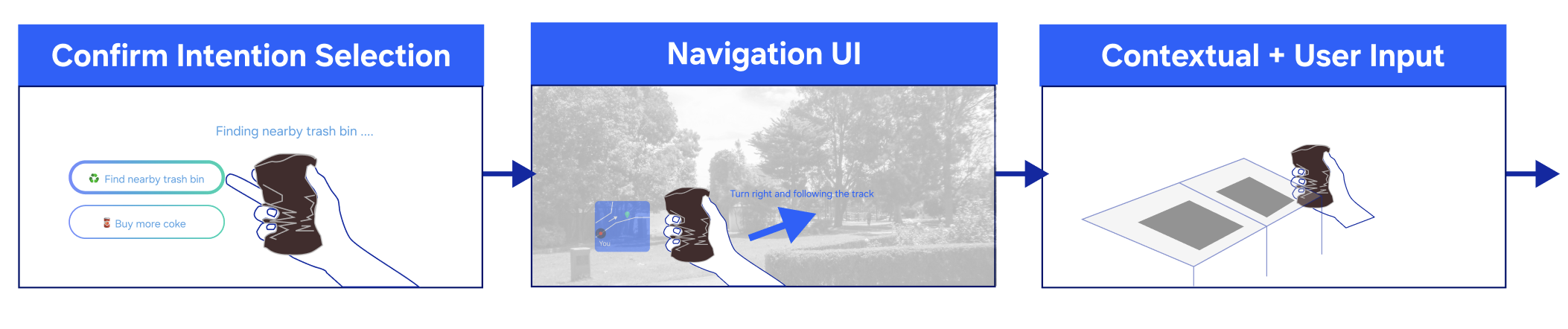

The system resolves intent by combining gesture with environmental and behavioral signals, rather than mapping gestures to fixed commands. The system can be applied to a wide range of scenarios beyond the core flows.

Key interface categories

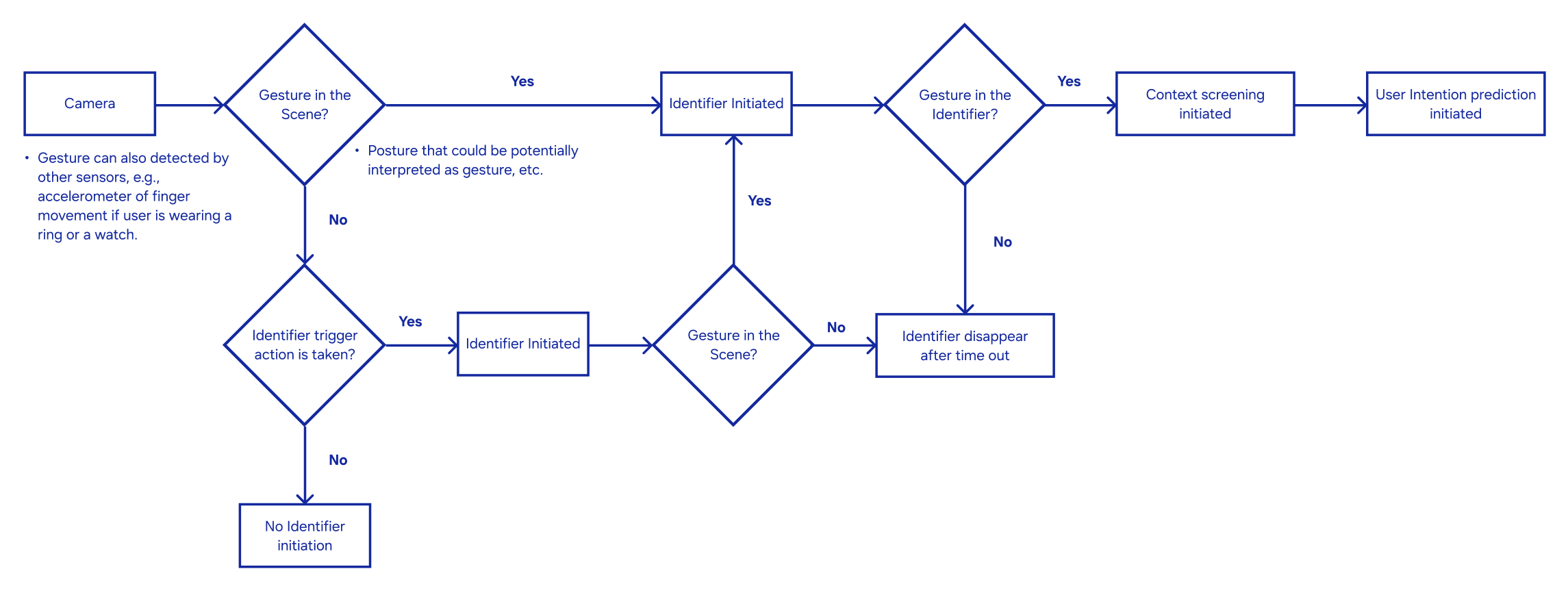

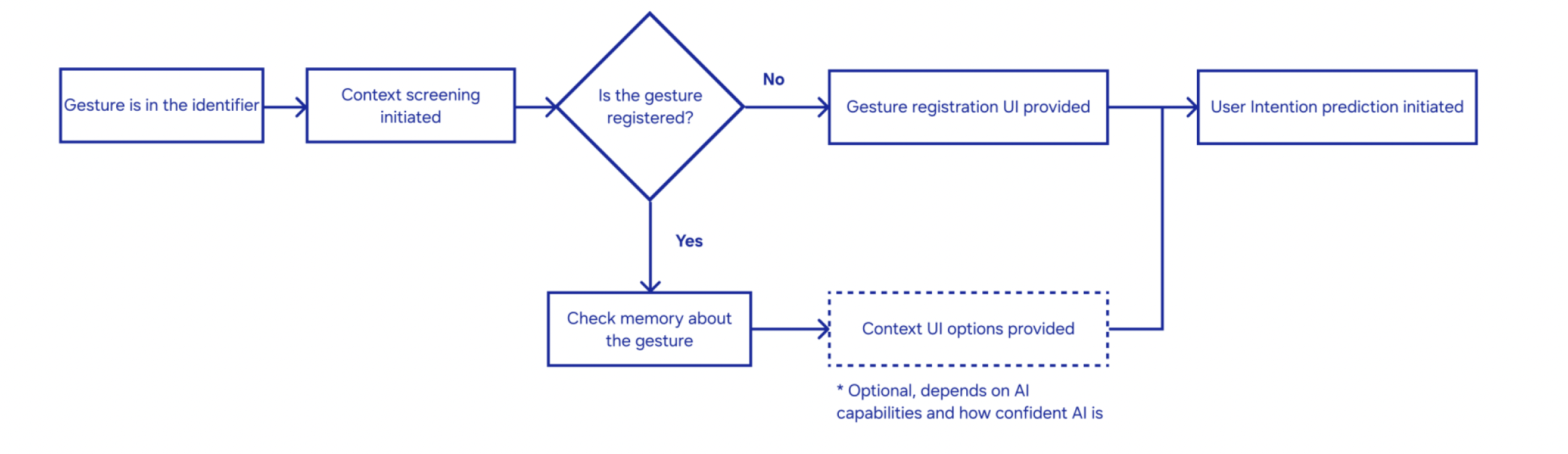

The system operates as a staged loop: detect gesture, interpret context, predict intent, and respond. It extends beyond recognition — deciding when to ask, suggest, guide, or act based on confidence.
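
A confidence-gated response policy of this kind could look like the sketch below. The threshold values are placeholders, and the ordering (ask at the lowest confidence, then suggest, guide, and act at the highest) is an assumption; the source names the four responses but not their cut-offs.

```python
import bisect

# Hypothetical cut-offs between the four response types.
THRESHOLDS = [0.4, 0.6, 0.85]
RESPONSES = ["ask", "suggest", "guide", "act"]


def choose_response(confidence: float) -> str:
    """Map an intent-confidence score in [0, 1] to a response type."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # bisect_right counts how many thresholds the score has reached or passed.
    return RESPONSES[bisect.bisect_right(THRESHOLDS, confidence)]


for score in (0.2, 0.5, 0.7, 0.95):
    print(score, choose_response(score))
```

Keeping the thresholds in one list makes it easy to tune how cautious the system is before it acts without asking.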

01

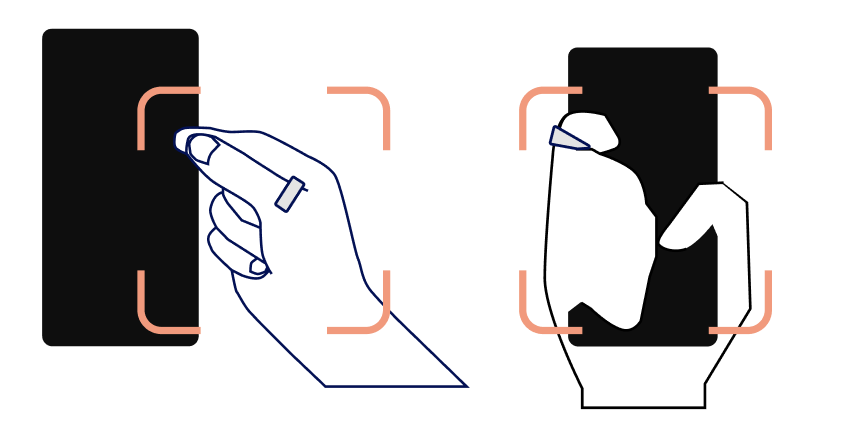

Gesture identifier & feedback

A visual cue confirms input immediately — whether the gesture is recognized or not — enabling lightweight, continuous onboarding.

02

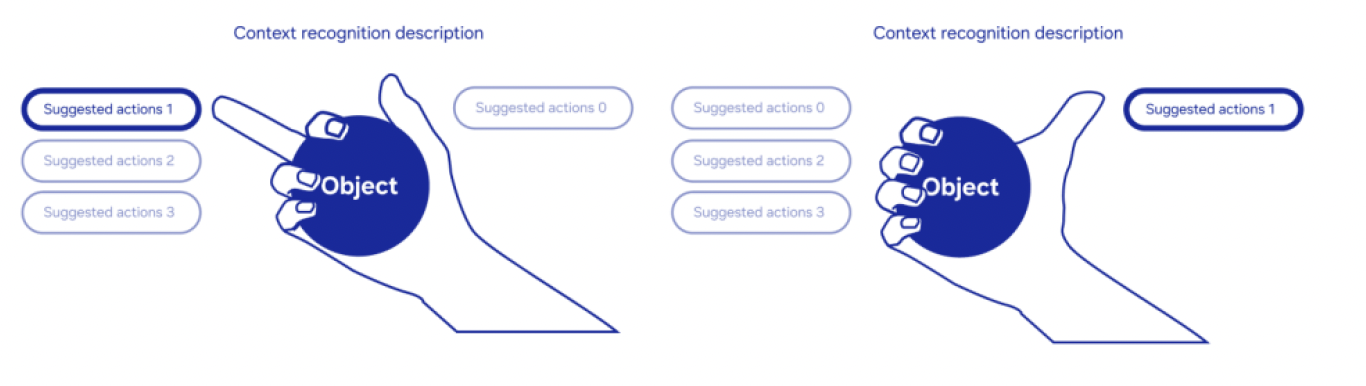

Ergonomic context option UI

Relevant actions appear around the hand, adapting to posture and movement without disrupting focus.

03

Learning from unregistered gestures

Repeated unknown gestures trigger suggestions, allowing the system to learn incrementally from user behavior.

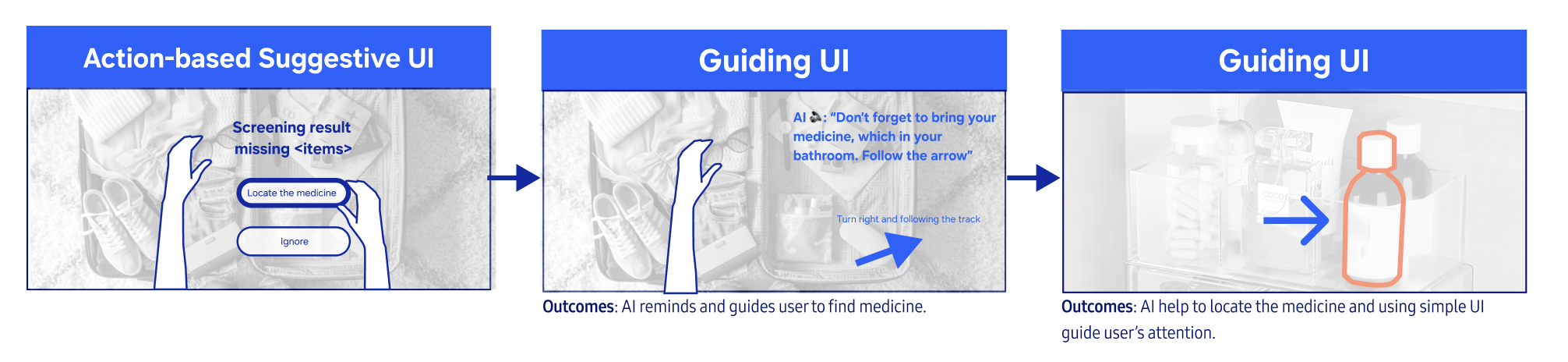

04

Guidance-based output

The system responds with contextual guidance or actions in real time, depending on confidence.

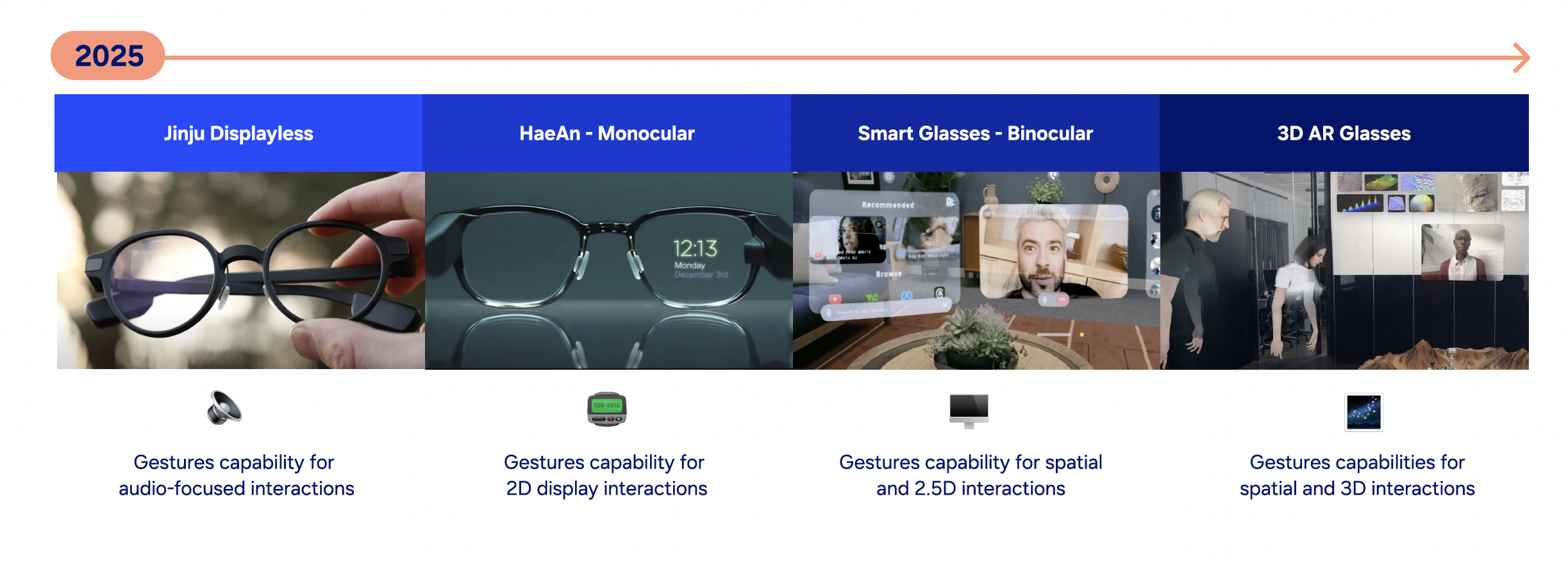
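
The third category, learning from unregistered gestures, can be approximated with a simple repetition counter. The class name, the return labels, and the default of three repetitions before a suggestion are assumptions made for this sketch.

```python
from collections import Counter


class UnknownGestureTracker:
    """Suggest registering a gesture once it has been repeated often enough."""

    def __init__(self, known, suggest_after=3):
        self.known = set(known)
        self.suggest_after = suggest_after  # hypothetical repetition threshold
        self.unknown_counts = Counter()

    def observe(self, gesture: str) -> str:
        if gesture in self.known:
            return "recognized"
        self.unknown_counts[gesture] += 1
        if self.unknown_counts[gesture] >= self.suggest_after:
            return "suggest_registration"
        # Still acknowledged to the user, just not mapped to anything yet.
        return "unrecognized"

    def register(self, gesture: str) -> None:
        """Called when the user accepts the suggestion."""
        self.known.add(gesture)
        self.unknown_counts.pop(gesture, None)


tracker = UnknownGestureTracker(known={"pinch"})
results = [tracker.observe("double_tap_temple") for _ in range(3)]
tracker.register("double_tap_temple")
results.append(tracker.observe("double_tap_temple"))
print(results)
```

Every observation returns a status, which matches the idea that feedback is given whether or not the gesture is recognized.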

Hardware spectrum

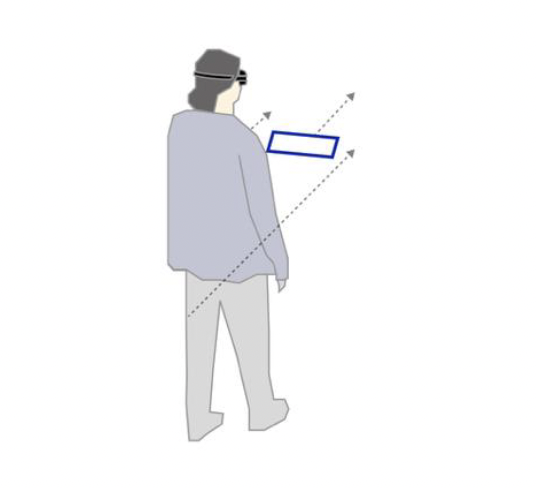

The system was designed to scale across devices, from audio-only glasses to full AR headsets, adapting input and output to each device's hardware capabilities.

Tier 1

Displayless

Audio + minimal input — gestures interpreted through context and history.

Tier 2

Monocular

Limited display — gestures guide lightweight visual responses.

Tier 3

Binocular

Spatial awareness — gestures interact with environment and depth.

Tier 4

Full AR

Full multimodal system — gestures operate in 3D space with adaptive feedback.
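
Adapting output to a device tier can be sketched as a capability lookup with graceful fallback. The four tiers come from the list above; the modality names and the exact capability set assigned to each tier are assumptions for illustration.

```python
from enum import IntEnum


class Tier(IntEnum):
    DISPLAYLESS = 1
    MONOCULAR = 2
    BINOCULAR = 3
    FULL_AR = 4


# Assumed output modalities per tier; each tier adds to the one below it.
OUTPUTS = {
    Tier.DISPLAYLESS: {"audio"},
    Tier.MONOCULAR: {"audio", "2d_visual"},
    Tier.BINOCULAR: {"audio", "2d_visual", "spatial_visual"},
    Tier.FULL_AR: {"audio", "2d_visual", "spatial_visual", "3d_interactive"},
}


def pick_output(tier: Tier, preferred: list[str]) -> str:
    """Return the first preferred modality that the device tier supports."""
    for modality in preferred:
        if modality in OUTPUTS[tier]:
            return modality
    # Audio is available on every tier in this sketch.
    return "audio"


preference = ["spatial_visual", "2d_visual", "audio"]
for tier in Tier:
    print(tier.name, pick_output(tier, preference))
```

The same response preference degrades from spatial visuals to audio as the hardware gets simpler, so the interaction logic itself does not need to change per device.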

Impact and Outcome

01

Invention

The method was formalized as an A1-graded Disclosure of Invention — the highest commercial viability rating — establishing a foundation for adaptive, personalized interaction systems across XR platforms.

02

System contribution

The project reframes gesture interaction from command input to contextual inference, and defines a framework that scales from displayless devices to full AR systems.

03

Outlook

This system establishes an input architecture for future agentic experiences by operating as a continuous loop of perception, inference, action, feedback, and learning. As confidence grows, explicit steps compress, and interaction shifts from reactive to anticipatory.
